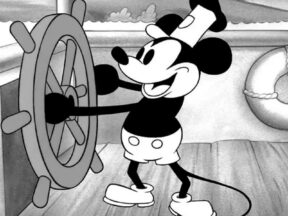

This AI Model Is Trained On Public Domain Stills To Create Mickey Mouse Images

Last month, we ran a piece about some of the most questionable uses of the Mickey Mouse character after Steamboat Willie hit the public domain. Few, if any, are as off-putting as some of the new AI art of Disney’s spokesmouse that is currently being created.

Much of the offending Mickey artwork was created by Mickey-1928, an AI model developed by Pierre-Carl Langlais, head of research at Opsci, a French AI research lab.

The model, released just after the turn of the year, can be used to create images of Mickey, Minnie, and Peg Leg Pete based on images taken from a trio of now-public domain 1928 Disney shorts. To train the model, Langlais used a modified version of Stable Diffusion XL and 34 stills from Steamboat Willie, 22 from Plane Crazy, and 40 from The Gallopin’ Gaucho, all of which entered the public domain on January 1.

Image-generating AI models require massive amounts of training data to produce images that satisfy user inputs, and the 96 screenshots used by Langlais have yielded very low-quality output. The generated images also frequently include features that don’t come from the Disney shorts. This is because of the Stable Diffusion foundation on which Mickey-1928 was built. Stable Diffusion was trained on mountains of copyrighted data, raising questions about the legality of the entire platform, as well as Mickey-1928 and any other model built on software trained on stolen data.

Lawmakers and the courts are currently debating those questions, and if it is determined that programs trained on copyrighted images have violated copyright protections, models like Mickey-1928 will almost certainly be as guilty as the foundations on which they’re built.

In the comments section of an article about Mickey-1928 published by Ars Technica, Langlais explained that he’s aware of the line his model may be crossing by using Stable Diffusion and said that the public domain might provide a lot of answers to the ethical and legal questions raised by the emergence of AI tech:

> I do agree with the issues of using a model trained on copyrighted content. I’m currently part of a new project to train a French LLM on public domain/open science/free culture sources, not only out of concern for author rights but also to enforce better standards of reproducibility and data provenance in the field. I’m hoping to see similar efforts on diffusion models this year. My general impression is that the copyright extension terms have made impossible an obvious solution to the AI copyright problem: having AI models trained openly on 20th century culture, and thus creating powerful incentives to digitize newspapers, books, movies for the commons.
