Tools Of The Trade: How Buck And Takashi Murakami Used Spark AR To Bring The Artist’s Work To Life

In our Tools Of The Trade series, industry artists and filmmakers speak about their preferred tool on a recent project, be it digital or physical, new or old, deluxe or dirt-cheap. In this installment, Helena Dong joined us to discuss how she and her team at Buck used Spark AR, Meta’s augmented reality creation tool, to produce the augmented reality effects for Takashi Murakami’s exhibition at The Broad in Los Angeles, open through September 25.

Over to Dong:

Helena Dong: I’m a designer, creative technologist, and art director based in New York City, interweaving conceptual undercurrents from fashion, performance, and augmented reality (ar). Previously I’ve developed and directed ar activations for A24, Coachella, Nike, Prada, Selfridges, and Vogue. Now, alongside a global team at Buck, I collaborate on experiential projects that adopt various immersive technologies.

The Installation

In partnership with Instagram, we created six site- and exhibit-specific ar effects for “Takashi Murakami: Stepping on the Tail of a Rainbow,” which opened at The Broad Museum in May 2022.

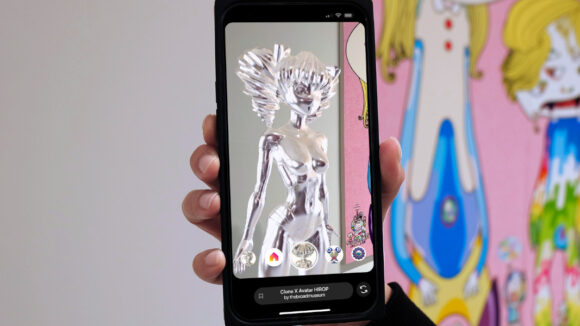

In the context of Murakami’s exhibition, his first U.S. museum show to include an ar component, these effects become installations in their own right, unlocked by custom activation vinyls on site. Visitors scan a vinyl to launch an Instagram effect built with Spark AR, which uses target tracking to place 3d objects around them.

Across all six ar effects we drew on Murakami’s vibrant, expressive characters, including the iconic Ohana Flowers, Mr. DOB, the demons “Um” and “A”, Pom & Me, My Lonesome Cowboy, and HIROPON, to layer the physical space with additional richness. The effects tie his artistic vision to the architecture and ethos of The Broad while guiding the visitor’s journey from the East-West Bank Plaza outside the museum all the way to the “Land of the Dead” gallery space.

The Tool

Spark AR Studio is an augmented reality creation tool that enables users to build ar effects for an array of platforms within the Meta family, including Instagram, Facebook, Messenger, and Portal. The experiences are primarily targeted at mobile or tablet devices. Spark AR comprises several desktop applications for creation and simulation, and an online portal for publishing and analytics.

Since the launch of its public beta in 2019, Spark AR has democratized the development process so that anyone can create ar effects, regardless of programming experience.

On the creation side, Spark AR supports both high- and low-fidelity prototypes; the tool is flexible and makes it easy to carry ideas from paper into virtual space. This speeds up our iteration process and lets us implement feedback promptly, without much of a disconnect between our creative leads and creative technologists.

Also central to the tool is its reach: making ar experiences available on Instagram broadens their audience, even when the experiences are site-specific. Because the activations live on an existing social media platform, most visitors don’t need to download an additional app. The effects open through the Instagram camera, so visitors can instantly share what they see.

Within Spark AR, an effect’s logic is often built in the Patch Editor, a visual scripting system in which you connect nodes together. Creators can develop through the Patch Editor, through traditional scripting, or with a combination of both. Coding skills aren’t required, but an understanding of programming logic is highly beneficial, since that logic is directly reflected in how the nodes are arranged. Scripting also lets you build more advanced techniques into your effects.
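To illustrate why programming logic carries over to the Patch Editor, here is a minimal sketch in plain TypeScript. It is not Spark AR’s API: it models a tiny node graph (a tap pulse feeding a counter whose value picks one of several colors) as ordinary functions, where wiring nodes together is just function composition. The names `counterNode`, `switchNode`, and `onTap` are hypothetical.

```typescript
// Hypothetical model of a small patch graph: each "node" is a function and
// each connection is a function call. Not the Spark AR API.

// Counter node: advances on every pulse and wraps around at `max`.
function counterNode(max: number): () => number {
  let count = 0;
  return () => {
    count = (count + 1) % max;
    return count;
  };
}

// Switch-like node: selects one of several options by index.
function switchNode<T>(options: T[]): (index: number) => T {
  return (index: number) => options[index];
}

// Wiring: tap pulse -> counter -> option picker.
const nextIndex = counterNode(3);
const pickColor = switchNode(["pink", "yellow", "blue"]);

function onTap(): string {
  return pickColor(nextIndex());
}

const results: string[] = [onTap(), onTap(), onTap(), onTap()];
console.log(results.join(", ")); // yellow, blue, pink, yellow
```

Reading the graph as code makes the state explicit: the counter holds a value between taps, which is exactly the kind of reasoning you need when arranging nodes visually.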

Examples

Using Spark AR, the Buck Creative Technology team, led by Kirin Robinson and Daniel Vettorazi, calibrated the effects so that 3d objects would instantiate once the visitor scans the floor vinyl. These objects were precisely arranged relative to the position and angle of each vinyl.
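Arranging objects "relative to the position and angle of each vinyl" amounts to transforming offsets authored in the vinyl’s local frame into world space. The sketch below is a minimal illustration, assuming a Y-up coordinate system and a tracker that reports the vinyl’s position plus a yaw angle about the vertical axis; `TrackedTarget` and `vinylToWorld` are hypothetical names, not Spark AR APIs. In a scene graph, parenting objects under a tracker node performs this transform implicitly.

```typescript
// Hypothetical sketch: convert a position authored relative to a floor vinyl
// into a world position. Assumes Y-up and rotation only about the Y axis.

interface Vec3 {
  x: number;
  y: number;
  z: number;
}

interface TrackedTarget {
  position: Vec3; // where the tracker detected the vinyl
  yawRadians: number; // the vinyl's rotation about the vertical (Y) axis
}

function vinylToWorld(target: TrackedTarget, local: Vec3): Vec3 {
  const c = Math.cos(target.yawRadians);
  const s = Math.sin(target.yawRadians);
  // Rotate the local offset about Y, then translate by the vinyl's position.
  return {
    x: target.position.x + c * local.x + s * local.z,
    y: target.position.y + local.y,
    z: target.position.z - s * local.x + c * local.z,
  };
}

// Usage: a vinyl at (1, 0, 2) turned 90 degrees; an object authored
// one unit along the vinyl's local +Z axis.
const vinyl: TrackedTarget = { position: { x: 1, y: 0, z: 2 }, yawRadians: Math.PI / 2 };
const flowerPos = vinylToWorld(vinyl, { x: 0, y: 0, z: 1 });
console.log(flowerPos);
```

Because every object is stored as an offset from the vinyl, the whole arrangement stays consistent no matter where, or at what angle, the vinyl is found.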

Patch assets are groups of nodes saved as reusable assets; they streamline our workflow in the Patch Editor and are crucial for building advanced ar effects. Kirin and Daniel created a “procedural colorways system” using custom patch assets that allowed us to apply twelve different color sets to the same facial animations for the Ohana Flowers. Twelve colorways were condensed into four sprite sheets covering eight seconds of animation!
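The arithmetic behind packing twelve colorways into four sprite sheets can be sketched as a lookup from a colorway index and a playback time to a sheet, a slot within that sheet, and the UV offset of the current animation frame. This is a hypothetical illustration, not Buck’s system: it assumes three colorways per sheet (12 ÷ 4), and the frame rate and grid size are invented values, since the article does not state them.

```typescript
// Hypothetical sprite-sheet lookup for a colorways setup.
// Assumptions (not from the project): 3 colorways per sheet, 6 fps,
// frames laid out row by row on an 8 x 6 grid (48 cells = 8 s at 6 fps).

const COLORWAYS = 12;
const SHEETS = 4;
const PER_SHEET = COLORWAYS / SHEETS; // 3
const FPS = 6; // hypothetical
const DURATION_S = 8;
const FRAME_COUNT = FPS * DURATION_S; // 48
const COLUMNS = 8; // hypothetical grid
const ROWS = 6;

interface SpriteLookup {
  sheet: number; // which of the four textures to sample
  slot: number; // which of that sheet's three colorways to use
  u: number; // left edge of the frame cell, in [0, 1)
  v: number; // top edge of the frame cell, in [0, 1)
}

function lookup(colorway: number, timeSeconds: number): SpriteLookup {
  if (!Number.isInteger(colorway) || colorway < 0 || colorway >= COLORWAYS) {
    throw new RangeError(`colorway must be an integer in [0, ${COLORWAYS})`);
  }
  const t = Math.max(0, timeSeconds);
  const frame = Math.floor(t * FPS) % FRAME_COUNT; // loops every 8 seconds
  const col = frame % COLUMNS;
  const row = Math.floor(frame / COLUMNS);
  return {
    sheet: Math.floor(colorway / PER_SHEET),
    slot: colorway % PER_SHEET,
    u: col / COLUMNS,
    v: row / ROWS,
  };
}

console.log(lookup(7, 9)); // colorway 7, one second into the second loop
```

The design point is that the animation frames are shared: only the sheet and slot change per colorway, so adding color variety does not multiply the number of textures one-to-one.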

By applying a 3d-scanned model of The Broad’s lobby with an occlusion texture, we were able to carve alcoves into walls where the twin demons stand.

Using the same occlusion technique, the floor opens up to an underground portal with volumetric depth, out of which the Murakami avatars HIROPON and My Lonesome Cowboy emerge.
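Both the alcoves and the floor portal rest on the same idea, which can be reduced to a per-pixel depth test: the scanned lobby is drawn invisibly but still occludes, so virtual content behind a real wall or floor is hidden and the camera feed shows through, while content seen through a carved-out opening stays visible. The sketch below is a hypothetical simplification of what a renderer does; `shadePixel` and its parameters are illustrative names only.

```typescript
// Hypothetical per-pixel model of an occlusion material.
// The scanned room mesh contributes depth but no color; virtual content is
// shown only where it is nearer to the camera than the nearest occluder.

type Pixel = string; // stand-in for a color value

interface Fragment {
  depth: number; // distance from the camera; smaller means nearer
  color: Pixel;
}

function shadePixel(
  cameraFeed: Pixel,
  occluderDepth: number | null, // null where nothing occludes this pixel
  virtual: Fragment | null,
): Pixel {
  if (virtual === null) return cameraFeed;
  // Depth test against the invisible occluder surface.
  if (occluderDepth !== null && occluderDepth < virtual.depth) {
    return cameraFeed; // hidden behind the real wall or floor
  }
  return virtual.color;
}

// A demon 3 m away: hidden by a wall at 2 m, visible inside a carved alcove.
console.log(shadePixel("wall", 2, { depth: 3, color: "demon" }));
console.log(shadePixel("wall", null, { depth: 3, color: "demon" }));
```

The same rule produces the portal: figures placed below floor level are hidden wherever the floor occluder is nearer, and appear only through the opening where that occluder has been cut away.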

We set a timer to trigger an animation of 3d flowers blooming beside Mr. DOB, and built a particle system with animated sprites raining down from Mr. DOB’s cloud, showing each individual flower awakening, yawning, and smiling before dissolving away.
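The timer and the per-particle animation can both be expressed as functions of elapsed time. The sketch below is a hypothetical illustration: the phase durations and the bloom delay are invented values (the article does not give them), and `bloomStarted` and `particleState` are illustrative names rather than Spark AR patches. Each falling flower’s age selects its current phase, and its opacity fades out during the dissolve.

```typescript
// Hypothetical timing for the Mr. DOB sequence. All durations are invented
// for illustration; the article does not state them.

type Phase = "awaken" | "yawn" | "smile" | "dissolve" | "gone";

const PHASES: Array<[Phase, number]> = [
  ["awaken", 0.5],
  ["yawn", 0.75],
  ["smile", 0.75],
  ["dissolve", 0.5],
];

const BLOOM_DELAY_S = 3; // hypothetical timer before the 3d flowers bloom

function bloomStarted(elapsedSeconds: number): boolean {
  return elapsedSeconds >= BLOOM_DELAY_S;
}

// Map a particle's age to its current phase and opacity.
function particleState(ageSeconds: number): { phase: Phase; opacity: number } {
  let start = 0;
  for (const [phase, duration] of PHASES) {
    if (ageSeconds < start + duration) {
      const progress = (ageSeconds - start) / duration; // 0..1 within the phase
      // Fully opaque until the dissolve phase, then fade linearly to zero.
      const opacity = phase === "dissolve" ? 1 - progress : 1;
      return { phase, opacity };
    }
    start += duration;
  }
  return { phase: "gone", opacity: 0 }; // the particle can be recycled
}

console.log(particleState(1.0)); // a flower mid-yawn
```

Driving everything from elapsed time keeps each particle independent: flowers spawned at different moments simply sit at different points in the same timeline.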

Tips

For anyone interested, the Spark AR platform provides an abundance of learning resources, project templates, and reusable assets. In addition to its documentation, Spark also offers a curriculum for pursuing a professional certification.

When I downloaded the software for the first time in September 2019, I had no programming knowledge, nor any understanding of 3d design. My first published ar effect was decidedly plain, with only a single transparent png over the face.

That first step seemed simple enough, but over time I became aware of the sweeping variety of disciplines involved in ar, given the spatial and interactive qualities of the medium. For one, an awareness of the 2d and 3d asset pipeline is integral to both the Spark AR workflow and the final experience. The list extends to animation, visual design, scenography, mechanics of play, and beyond. What makes an ar experience immersive and compelling? What techniques elevate its visual fidelity? Which configurations best optimize real-time performance? These are the kinds of questions you might ask as you explore the tool, and ones you will need to answer once you start using Spark AR professionally.

If you’re unsure of where to begin, I suggest diving into the official documentation and curriculum. Join the Spark AR Community Group on Facebook; it’s a hub for creators at all levels to share projects, exchange insights and learn from each other.

Final Thoughts

Spark AR is a fascinating tool that allows us to annotate the world with a new visual language. As we explore and create with it, we also need to home in on what maximizes the value of ar for people. Sometimes, taking a step back to evaluate the “why” is key to ensuring that our use of nascent technologies is grounded in genuine intention. But before we get to that point, I encourage you to simply give it a go with an open mind: a sense of curiosity is all you need to get started.