How Real-time Rendering Is Changing VFX And Animation Production

Over the past few years, there’s been a lot of discussion about the convergence of video games and visual effects. Both mediums tend to rely on the same – or similar – tools these days to generate cg assets and animate them, and the quality of video games is certainly approaching the level of photorealism that can be achieved in vfx, even though games need to render things in milliseconds.

But another much-less-discussed area of that convergence is how video game technology, especially real-time rendering, is actually being used in visual effects (and animation) production.

One company – Epic Games, which makes Unreal Engine – is notable for pushing the capabilities of its software beyond the world of gaming. It has been experimenting in areas that might normally be considered the domain of visual effects and animation: virtual production, digital humans, animated series, and even car commercials. It also just released a real-time compositing tool. Today, Cartoon Brew takes a look at where Unreal Engine is being used in this video game and visual effects cross-over.

What is real-time rendering?

Real-time rendering is really all about interactivity. It means that the images you see – typically in a video game, but also in augmented and virtual reality, and mobile experiences – are rendered fast enough that a user can interact or move around in the environment.

Because complex images tend to take longer to render, real-time rendering has never been quite the same quality as what has been possible with final renderers in vfx and animation using tools such as RenderMan, Arnold, and V-Ray.
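To put rough numbers on that trade-off, here is a minimal sketch of the per-frame time budget a real-time renderer has to work within. The function names `frame_budget_ms` and `fits_realtime` are hypothetical and used only for illustration; they are not part of Unreal Engine or any other renderer mentioned here.

```python
def frame_budget_ms(fps: float) -> float:
    """Return the time available to render one frame, in milliseconds."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


def fits_realtime(render_ms: float, fps: float) -> bool:
    """True if a frame that takes render_ms can keep up with the target fps."""
    return render_ms <= frame_budget_ms(fps)


if __name__ == "__main__":
    # Illustrative frame rates only: film, broadcast, games, VR.
    for fps in (24, 30, 60, 90):
        print(f"{fps} fps -> {frame_budget_ms(fps):.1f} ms per frame")
```

At 60 frames per second the budget is about 16.7 milliseconds per frame, whereas a final-quality frame from an offline renderer is under no such constraint and can take far longer.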

But this is changing. Physically plausible effects and ray tracing, once solely the domain of full-blown rendering engines, are now often embedded in real-time renderers inside game engines. And that means the results out of game engines, although still not at the level that the mainstream renderers can produce, can be good enough to be part of a robust filmmaking pipeline.

Where visual effects and real-time meet

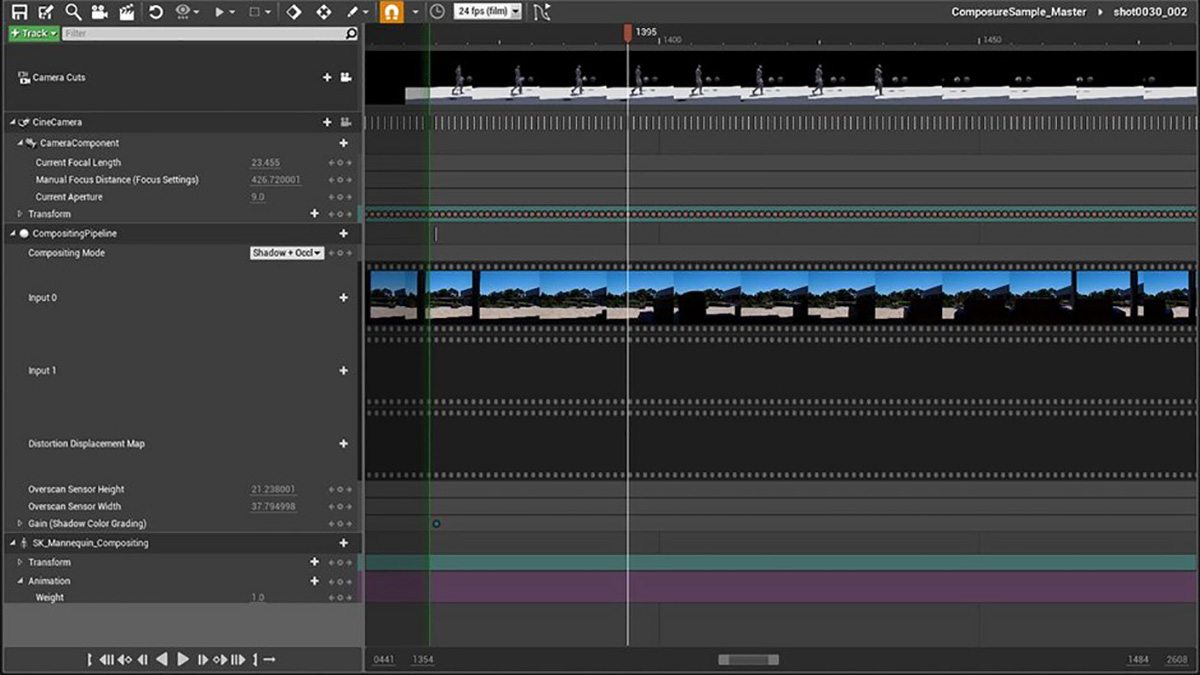

Recent Epic Games demos have showcased what real-time rendering with Unreal Engine can achieve when used in a visual effects pipeline. Epic has also recently released a compositing tool called Composure, a plugin for Unreal Engine 4.17. It allows cg imagery to be composited – in real-time – against live-action footage, further bringing visual effects concepts into the game engine world.
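For readers unfamiliar with the term, compositing cg over live action typically comes down to blending each rendered pixel with the matching pixel of the filmed plate. The sketch below shows the standard "over" operation with premultiplied alpha in plain Python. It is a generic illustration only: it does not represent Composure's API or how Composure is implemented, and the function name `over` is an assumption made for this example.

```python
from typing import Tuple

RGB = Tuple[float, float, float]
RGBA = Tuple[float, float, float, float]


def over(fg: RGBA, bg: RGB) -> RGB:
    """Composite a premultiplied-alpha foreground pixel over an opaque background.

    fg is (r, g, b, a) with colour already multiplied by alpha;
    bg is (r, g, b). All channels are in the range 0.0 to 1.0.
    """
    r, g, b, a = fg
    # The foreground contributes fully; the background shows through
    # in proportion to how transparent the foreground is.
    return tuple(f + (1.0 - a) * c for f, c in zip((r, g, b), bg))


if __name__ == "__main__":
    cg_pixel = (0.5, 0.0, 0.0, 0.5)   # half-transparent red, premultiplied
    plate_pixel = (0.0, 0.0, 1.0)     # blue live-action background
    print(over(cg_pixel, plate_pixel))
```

A real-time compositor has to do this, along with steps such as keying and colour matching, for every pixel of every frame within the frame-rate budget.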

One of the most notable recent demos was a computer-generated Chevrolet short called ‘The Human Race.’ Here, a stand-in car was filmed so that it could be tracked and immediately replaced with any manner of cg car. The project relied on the Blackbird, a proxy vehicle covered in tracking markers that was developed by visual effects studio The Mill. The real-time demo was also made possible by The Mill’s Cyclops virtual production toolkit, which worked together with Unreal Engine to overlay the cg car onto the live-action footage.

As well as being a way to film a car commercial without the actual final car in the frame, ‘The Human Race’ was also imagined as an augmented reality experience where users could change vehicle characteristics themselves (such as the make or color).

The car commercial demo is an example of the real-time game engine being used for virtual production. This is not a new concept – films such as Avatar, Real Steel, and The Jungle Book all relied on some kind of real-time or ‘simulcam’ system to deliver immediate results to the film crew on set, especially where virtual characters are involved, allowing them to re-frame or make changes on the fly.

An earlier Epic Games demo for Ninja Theory’s video game, Hellblade: Senua’s Sacrifice, combined Unreal Engine with human performance and facial capture, real-time cinematography, and real-time rendering. While still game quality, the Hellblade example did demonstrate how a filmed sequence could be captured, rendered, and edited in real-time.

In terms of animation production, a recent animated trailer for Epic’s video game Fortnite was rendered entirely in real-time in Unreal Engine 4 – it meant that the workflow did not rely on overnight renders and could involve multiple iterations during production. Digital Dimension is also rendering its animated series Zafari in Unreal Engine, although it has developed a hybrid workflow that still relies on traditional cg animation tools such as Maya (more on Zafari in an upcoming Cartoon Brew story).

What does this all mean for making content?

A heavier cross-over between real-time and live-action production, and even animation, likely means more tool choices for artists that will let them see results more quickly. It also means less reliance on render farms, as the scenes are composited in real-time (a powerful standalone computer/processor is still ideal, of course).

Kim Libreri, who was Oscar-nominated as visual effects supervisor on Poseidon and worked at ILM and LucasArts before becoming CTO at Epic Games, suggests that bringing game engines into live action is actually similar to filming things for real in the first place.

“On a film set, you get to the set, you look around, you move the camera and you’re like, ‘Oh, this actually has a cooler angle than we thought,’” Libreri told Cartoon Brew. “You get to experiment. So, what’s similar about a game engine to our real world is that we make simulated worlds, using things like physics engines, and that means you can have all these happy accidents.”

What Libreri is suggesting, here, is that relying on real-time tools can give creators a flexibility in generating cg worlds and characters, and in moving through synthetic environments. “People who play games know that anything can happen in a game, almost,” Libreri said. “Which means we can have these cool things happen and then turn them into stories.”

And since what can be done in visual effects with traditional renderers and what can be done with real-time renderers is getting closer, artists might also choose real-time over traditional rendering when the final results become generally indistinguishable. In Rogue One, for example, a few scenes featuring the droid K-2SO relied on Unreal Engine for rendering (nobody noticed until Epic Games revealed that this had occurred).

The tools available in game production and visual effects are already becoming more similar, too, and that creates a familiarity between the mediums. For example, to get modeling and texturing done in both games and visual effects, artists tend to rely on heavy lifters such as Maya and ZBrush.

“We now use very much the same tools,” Libreri said, in terms of video games and visual effects. “We almost talk the same language, the only difference is that millisecond.”
